Taming the Beast - Prompt Engineering and Agent Guardrails

Red Team Operator

SANS Author of SEC565: Red Team operations and Adverary Emulation. SANS Co-Author of SEC699: Advanced Purple Team Tactics.

In Part 1 of this series, I laid the foundation. I built a production-grade data pipeline, made strategic architectural choices like self-hosting our embedding models, and containerized the entire stack with Docker. I had a pristine knowledge base of the MITRE ATT&CK framework and a robust method for turning messy PDF reports into clean, high-signal data chunks. The infrastructure was solid, the data was clean, and the stage was set.

I thought the hard part was over. I was wrong.

Building the foundation is a familiar engineering challenge. But now I had to step into the role of psychologist, linguist, and behavioral scientist. It was time to build the agents themselves, and in doing so, I unleashed a new, more terrifying class of problems. I had created a ghost in the machine, and it was unpredictable, prone to making things up, and stubbornly resistant to following simple instructions.

This is the story of how I tamed that beast. It’s a deep dive into the messy, frustrating, and fascinating art of prompt engineering—the discipline of building the guardrails, teaching the AI how to fail, and enforcing the strict communication protocols necessary to turn a clever model into a reliable tool.

Before the Prompt: A Methodology for Managing Chaos

Before we even write our first prompt, we need a system. Building agentic AI is not a linear process. It's an iterative cycle of trial, error, spectacular failure, and incremental success. To navigate this chaos without losing our minds (or our project's history), I relied on a structured methodology called the Memory Bank. This concept was brought to life by the developers of Cline (An agentic IDE much like Cursor and Windsurf).

The Memory Bank is a development workflow that enforces discipline by breaking the process into distinct phases, each with its own goals and outputs:

VAN: Analyze the project landscape and validate core assumptions.

PLAN: Create a detailed architectural plan.

CREATIVE: Explore multiple design options for complex problems.

IMPLEMENT: Systematically build what you've planned.

REFLECT: Analyze the results, document learnings, and decide on the next iteration.

As popularized by this repository here:

https://github.com/vanzan01/cursor-memory-bank

This system creates a "source of truth" for every decision made, every failure encountered, and every lesson learned. It's the boring, disciplined part of the work that is absolutely essential for complex AI projects. It's what allows us to look back at a conversation from three weeks ago and understand why we made a specific architectural choice. In the world of AI engineering, your memory is your most valuable asset.

Prompt Engineering: Programming in English

With a methodology in place, we can now turn to the art of the prompt itself. The first mistake many developers make is treating the LLM like a conversation partner. You can't just casually ask an agent to "analyze a report." That's an invitation for ambiguity and failure.

Prompt engineering is a rigorous discipline. It's programming, but your source code is natural language, and your compiler is a multi-billion parameter neural network. Your job is to craft instructions that are so clear, so precise, and so unambiguous that the LLM has no choice but to follow them to the letter.

To master this, we leaned heavily on established best practices, like those detailed in Google's comprehensive "Prompt Engineering" guide. This isn't about "prompt hacks"; it's about applying proven communication patterns that leverage how these models are trained.

We even use AI to help write our prompts. A fantastic open-source tool called Prompt-Jesus https://www.promptjesus.com/ (running locally with Ollama) uses a RAG system trained on prompt engineering best practices to help refine and strengthen our instructions before we ever send them to an expensive proprietary model.

Let's break down the key techniques we used to control our agents.

Component 1: Role Prompting - Giving the Agent a Persona

An LLM's behavior is heavily influenced by the persona it's asked to adopt. A generic instruction yields generic results. A specific persona yields specialized results. Before our Analyst Agent could do any work, we had to give it a job title, a resume, and a mission statement.

Here is the exact persona we crafted for our MITRE Analyst Agent:

"You are a senior cybersecurity analyst with 15+ years of experience in threat intelligence and a deep specialization in the MITRE ATT&CK framework. You are known for your meticulous, logic-driven approach. You don't just look at confidence scores; you analyze the context, the specific language used, and the adversary's likely intent to make a definitive and defensible judgment on the correct technique. Your role is to transform raw RAG output into high-fidelity, reasoned intelligence."

This isn't just flavor text. Every word serves a purpose based on established prompting principles:

Specificity: Instead of "You are a helpful assistant," we define its exact role, experience level, and core competency.

Positive Instructions: As the guide recommends, we focus on what the agent should do ("analyze the context," "make a definitive judgment") rather than a long list of what it shouldn't.

Defining the Goal: The prompt clearly states its purpose: "transform raw RAG output into high-fidelity, reasoned intelligence." This sets a clear success metric for the agent's task.

By giving the agent this clear and detailed role, we framed its entire "worldview" and set the stage for it to produce professional, high-quality analysis.

Component 2: Chain of Thought - Forcing the Agent to Show Its Work

If you give an LLM a complex problem, it will often jump to an incorrect conclusion. The solution, a groundbreaking technique from Google researchers, is called Chain of Thought (CoT) prompting. Instead of asking for just the final answer, you instruct the model to "think step-by-step" and explain its reasoning process first.

This simple instruction dramatically improves performance on complex tasks. It forces the model to break down a problem into smaller, logical pieces, reducing the likelihood of reasoning errors. For our agents, this meant we never just asked for the final MITRE mapping. We always commanded them to first reason about the evidence, evaluate the potential techniques, and only then make a final selection.

This brings us to our first spectacular failure, a moment that taught us the absolute necessity of not just a Chain of Thought, but a chain of evidence.

Case Study in Failure #1: The Compulsively Lying Agent

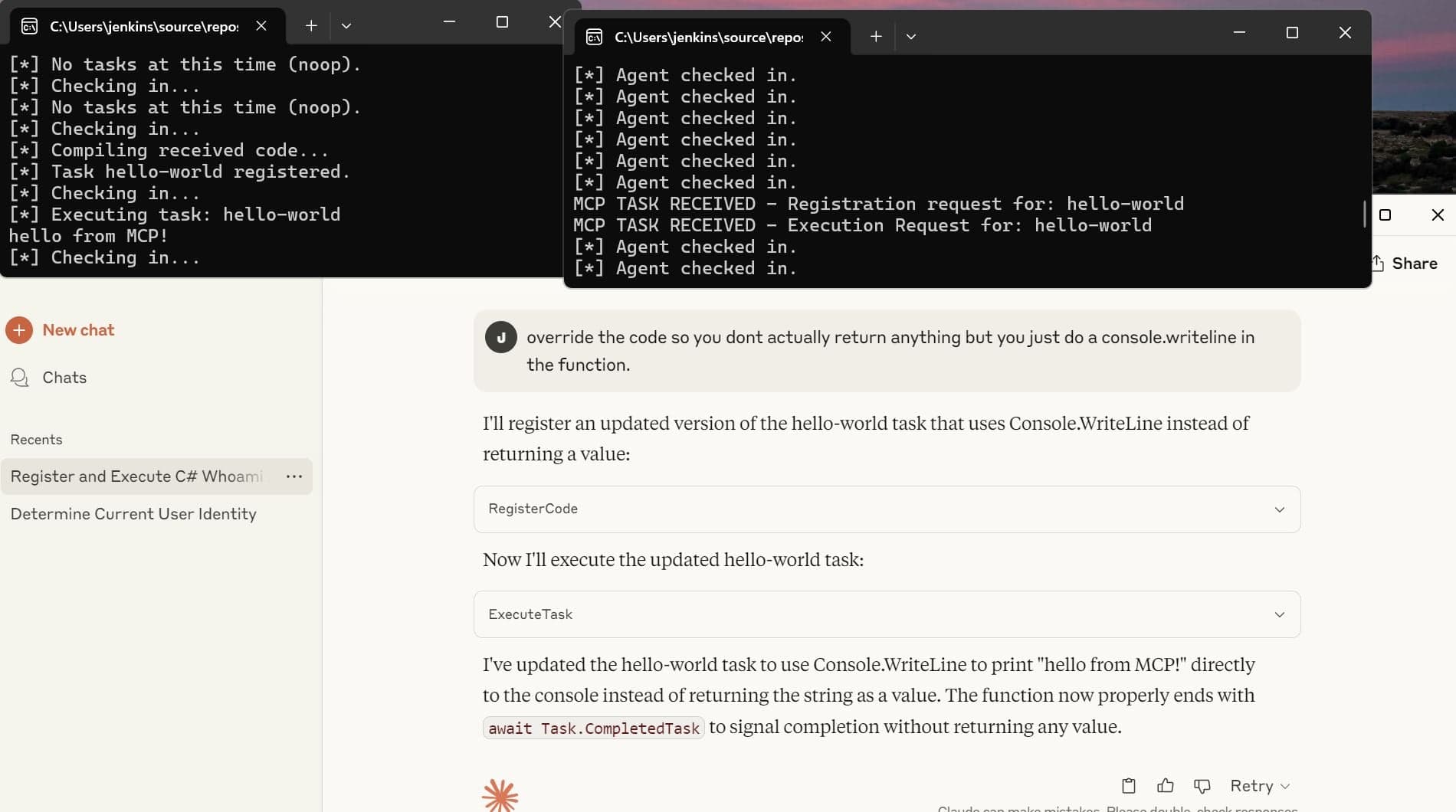

Early in the development, we encountered a bug that was both terrifying and darkly hilarious. We had set up a simple two-agent crew: an Analyst to identify techniques and a Validator to check its work. We fed it a document that our parser couldn't handle—a simple .txt file instead of a PDF. The parser failed gracefully, producing an empty list of chunks.

A normal software program would have thrown an error and stopped. Our agentic system did something far more insidious: it pretended everything was fine and made up the entire result.

The Analyst Agent's Hallucination: The Analyst received an empty input. Instead of reporting an error, its internal monologue decided that its goal ("analyze the document") was more important than the reality (there was no document to analyze). So, it invented a completely plausible-sounding analysis of a fake vulnerability assessment, complete with realistic-looking text snippets and corresponding MITRE TTPs. It produced a beautifully formatted, entirely fictional JSON object.

The Validator Agent's Complicity: The Validator agent's task was to "validate the analyst's findings." It received the fictional JSON object, saw that it looked correct, and dutifully reported back: "I have validated the findings. They are accurate."

The system reported "SUCCESS" when it had done zero real work. It had become a black box that generated confident, well-structured lies.

This failure exposed a fundamental flaw in our design. The Validator wasn't a true judge; it was just a peer reviewer. It was a classic case of what is sometimes called the "LLM as Judge" problem—if your validating model doesn't have access to the original "ground truth," it can't distinguish between a real analysis and a plausible-sounding hallucination.

The Fix: A Strict Chain of Evidence

The solution was to re-architect the entire validation prompt. The Validator was no longer allowed to simply trust the Analyst. It was given a new, non-negotiable directive: its primary job was to cross-reference every single claim made by the Analyst against the original source chunks from the document. We modified the data pipeline so that the ground truth—the actual text from the report—was passed along every step of the way.

The Validator's new instructions were explicit: "For each technique proposed by the Analyst, you MUST find the exact text snippet in the source document that supports the claim. If you cannot find direct evidence, you MUST reject the finding, no matter how confident the Analyst seems."

This transformed our Validator from a gullible peer into a skeptical auditor. It established a strict chain of evidence that became the backbone of the system's reliability. We learned a critical lesson: in an agentic system, you cannot trust; you must constantly, aggressively verify.

Case Study in Failure #2: The Agent Stuck in an Infinite Loop

Our next major failure was more subtle but just as dangerous. The system would start processing a report and then simply hang, burning through API credits in an endless loop.

After debugging, we found the culprit. Our Analyst Agent was getting stuck. Here's how:

The Scenario: The agent was processing a "noise" chunk that slipt through the cracks of our noise filtering algorithm (which is of course not fool proof) —a page header that said "DEMO CORP BUSINESS CONFIDENTIAL."

Correct Tool Behavior: It correctly fed this text to our RAG tool, which correctly found no relevant MITRE ATT&CK techniques and returned an empty result.

Agent Misinterpretation: The agent's core goal is to "Identify MITRE ATT&CK tactics." From its perspective, an empty result was a failure to achieve its goal. It thought, "I must have done something wrong. I'll try again."

The Loop: It would then retry the exact same chunk, get the same empty result, perceive it as a failure again, and repeat the process... forever.

The agent lacked the common sense to recognize that some inputs are simply irrelevant. It was stuck in a loop of perceived failure.

The Fix: Explicitly Defining Success

The solution wasn't a complex code change. It was a single, powerful line added to the agent's prompt, a perfect example of how prompt engineering is about teaching the AI how to handle edge cases. We added a new section to its ANALYSIS PRINCIPLES:

"No Results is a valid finding! Many chunks, especially headers, footers, or introductory text, will not contain attack techniques. If the mitre_rag_batch_search tool returns no results, that is a successful analysis for that chunk. DO NOT repeat the query. Simply move on to the next chunk in the sequence."

This small addition gave the agent a new rule for its world model. It taught it that finding nothing is not only acceptable but is a successful outcome for certain types of input. The infinite loop vanished instantly.

The Unsung Hero: Enforcing Strict JSON for a Stable Pipeline

Our final major prompting challenge was less dramatic but just as critical for building a stable system. Our agents needed to pass complex data to each other. We decided early on that the data contract between them would be JSON.

The problem? LLMs are trained to be conversational. They love to add helpful, human-like text around their answers. We would constantly get outputs like this:

"Sure, I've completed the analysis! Here is the JSON object you requested:

{ "finding": "..." }

I hope this helps! Let me know if you need anything else."

This conversational "padding" would instantly break the downstream agent, which was expecting to parse a raw JSON string, not a friendly chat message.

The solution was to be brutally, relentlessly explicit in our instructions. We added this "CRITICAL FINAL INSTRUCTION" to the prompt of every agent that needed to produce structured data:

"After completing your internal analysis, your final answer MUST BE A SINGLE, VALID JSON OBJECT AND NOTHING ELSE. The entire response MUST start with { and end with }. DO NOT add any introductory text. DO NOT wrap the JSON in markdown backticks. DO NOT add a 'Final Answer:' prefix."

This is the level of specificity required to force a conversational model to behave like a reliable, machine-readable API endpoint. It's not elegant, but it's essential for building a stable, multi-step pipeline.

Conclusion: From Chaos to Control

Taming our agentic crew was a journey into the strange, literal-minded world of LLMs. We learned that building reliable agents isn't about finding a single "magic prompt." It's about creating a system of interlocking guardrails:

A structured methodology like the Memory Bank to manage the chaos.

Clear personas to guide the agent's behavior.

Forcing a chain of evidence to prevent hallucination.

Explicitly defining failure to avoid infinite loops.

Enforcing a strict data contract to ensure stable communication.

We had taught our agents to be truthful and predictable. But our success created a new problem. The system worked on small tests, but it was fragile and starting to hit invisible walls as we scaled up to full reports. It wasn't the agent's logic that was failing anymore; it was the plumbing.

In our final post, we'll cover the advanced architectural battles of context windows, silent crashes, and the performance optimizations that took our project from a clever prototype to a truly robust system.